G'day:

I've got another interesting reader comment to address today. My namesake Adam Tuttle has sent through this wodge of questions, attached to my earlier article "TDD is not a testing strategy":

You weren't offering to teach anyone about TDD in this post, but hey... I'm here, you're here, I have questions... Shall we?

One of the things I struggle with w/r/t TDD is the temptation to test every. single. action. For example, a large, complex form-save. Dozens, possibly 100 fields. Whether that ends up saved via ORM entities or queries, chances are good that since the form is so large the logic is a bit beyond a dirt-simple single-CRUD query. Multiple relationships, order of operations, permissions to modify different fields, etc.

My gut reaction is to skip the unit-testing layer and jump up to an integration or E2E test: submit the form, then view the detail-view (or re-open the record for editing) and assert that the values changed have persisted and they are what you're expecting, where you're expecting it, on the latter view.

BUT doesn't that almost entirely eliminate the possibility of using mocks to make the tests fast(er), the base-state predictable, and to not leave a mess in a designated testing db/env? My (and I mean this literally!) feeble, bad-at-TDD brain doesn't comprehend what a good solution is to this problem.

Unless the solution is to not test that aspect of the code? I fully subscribe to the "100% test coverage is a fools errand" ethos, so perhaps this is something that should just not be tested; and save the testing for things that are doing "interesting" algorithmic work? (not-crud)

Since I specifically mentioned permission to edit a certain field in my example, I guess I should say that stands out to me as something I would likely want to test. Thinking about it now, my brain wants to architect a system that accepts the user object and the field name as inputs and returns a boolean for editable or not. Easy enough to implement and you're basically changing the conditional in the save method from "if user has X permission, entity.setProperty(newval)" to "if evaluatePermissions(user, property) = true, entity.setProperty(newval)", so there's no big mental leap to the next developer to read the code... but it also seems hairy to separate the permission logic from the form-save logic, not because of the separation, but because it leads towards combining the permission logic of lots of disparate and unrelated forms. I'm not seeing how that could be cleanly implemented.

So yeah. There's a can of worms for you. What do you make of that?

Nice one. There's a lot of work there, so I am going to approach it how I'd approach addressing any other requirements: a bit at a time. Like I'm doing TDD. Except I've NFI how I can write tests for a blog article, so just imagine that part. Also remember that TDD is not a testing strategy, it's a design strategy, so my TDD-ish approach here is focusing on identifying cases, and addressing them one at a time. OKOK, this is torturing my fixation with TDD a bit. Sorry.

You weren't offering to teach anyone about TDD in this post

OK, so first point. I'm always open to excuses to think about TDD practices, and how we can use them to address our work. So don't worry about that.

It needs to save a large form

For example, a large, complex form-save. Dozens, possibly 100 fields.

For my convenience I am going to interpret this as two separate things: the form, and the code that processes a post request. I suspect you were only meaning the latter. But, really, the same approach applies to both.

If you're looking at a large HTML form and thinking "how do I test that huge thing?!", you are not using a TDD mindset. Using the TDD mindset, it's not a huge thing. It starts off being nothing. It starts off perhaps with "requests to /myForm.html return a 200-OK". From there it might move on to "It will be submitted as a POST to /processMyForm", and then to "after a successful form submission the user is redirected to /formSubmissionResults.html, and that request's status is 201-CREATED". Small steps. No form fields at all yet. But we have demonstrated that those requirements of your work have been implemented, and that they pass their tests.

Next you might start addressing a form field requirement: "it has a text field with maximum length 100 for firstName". Quickly after that you have the same case for lastName. And then there might be 20 other fields that are all text and all have a sole constraint of maxLength, so you can test all of those really quickly, but still with the same amount of care, by using a data provider that passes the case variations to what is otherwise the same test. This is still super quick, and your cases still show that you have addressed the requirements. And you can demonstrate that with your test output:

✓ should have a required text input for fullName, maxLength 100, and label 'Full name'

✓ should have a required text input for phoneNumber, maxLength 50, and label 'Phone number'

✓ should have a required text input for emailAddress, maxLength 320, and label 'Email address'

✓ should have a required password input for password, maxLength 255, and label 'Password'

✓ should have a required workshopsToAttend multiple-select box, with label 'Workshops to attend'

✓ should list the workshop options fetched from the back-end

✓ should have a button to submit the registration

- should leave the submit button disabled until the form is filled

7 passing (48ms)

1 pending

MOCHA Tests completed successfully

(I've lifted that from the blog article I mention lower down).
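The data-provider idea for the simple text fields could look something like the following. This is only a minimal sketch in TypeScript: `TextFieldSpec`, `renderedFields`, `textFieldCases` and `checkTextFields` are all hypothetical names, and the lookup object is a stand-in for whatever your test framework gives you when it renders the real form.

```typescript
// Hypothetical shape of one text-field requirement (illustrative names only).
interface TextFieldSpec {
  fieldName: string;
  maxLength: number;
  label: string;
}

// Hypothetical shape of a field as found on the rendered form.
interface RenderedField {
  type: string;
  maxLength: number;
  label: string;
}

// Stand-in for the rendered form: a lookup of field definitions by name.
const renderedFields: Record<string, RenderedField | undefined> = {
  fullName: { type: "text", maxLength: 100, label: "Full name" },
  phoneNumber: { type: "text", maxLength: 50, label: "Phone number" },
  emailAddress: { type: "text", maxLength: 320, label: "Email address" },
};

// The "data provider": one row per requirement, all run through the same checks.
const textFieldCases: TextFieldSpec[] = [
  { fieldName: "fullName", maxLength: 100, label: "Full name" },
  { fieldName: "phoneNumber", maxLength: 50, label: "Phone number" },
  { fieldName: "emailAddress", maxLength: 320, label: "Email address" },
];

// Returns one message per unmet requirement; an empty array means all cases pass.
function checkTextFields(cases: TextFieldSpec[]): string[] {
  const failures: string[] = [];
  for (const testCase of cases) {
    const field = renderedFields[testCase.fieldName];
    if (field === undefined) {
      failures.push(`${testCase.fieldName}: field is missing`);
      continue;
    }
    if (field.type !== "text") {
      failures.push(`${testCase.fieldName}: expected a text input`);
    }
    if (field.maxLength !== testCase.maxLength) {
      failures.push(`${testCase.fieldName}: expected maxLength ${testCase.maxLength}`);
    }
    if (field.label !== testCase.label) {
      failures.push(`${testCase.fieldName}: expected label '${testCase.label}'`);
    }
  }
  return failures;
}
```

Adding the twentieth text field is then one more row in `textFieldCases`, not a new test. In a real suite each row would more likely become its own named test case, so the output reads like the listing above.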

Not all form fields are so simple. Some need to be select boxes that source their data from [somewhere]. "It has a select for favouriteColour, which offers values returned from a call to /colours/?type=favourite". This needs better testing than just name and length. "It has a password field that only accepts [rules]". Definitely needs testing discrete from the other tests. Etc.

Your form is a collection of form fields all of which will have stated requirements. If the requirements have been stated, it stands to reason you should demonstrate you've met the requirements. Both now in the first iteration of development, and that this continues to hold true during subsequent iterations (direct or indirect: basically new work doesn't break existing tests).

I cover an approach to this in the article "Vue.js: using TDD to develop a data-entry form". It's a small form, but the technique scales.

Bottom line: when using TDD you don't start with a massive form.

It's a similar story on the form submission handler. The TDD process doesn't start with "holy f**k 100 form fields!", it starts off with a POST request. Or it might start with a controller method receiving a request object that represents that request. Each value in that request must have validation, and you must test that, because validation is a) critical, and b) fiddly and error-prone. But you start with one field: firstName must exist, and must be between 1 and 100 characters long. You'd have these cases:

- It's not passed with the request at all (fail);

- It's passed with the request but its value is empty (fail);

- It's passed with the request and its length is 1 (pass);

- It's passed with the request and its length is 100 (pass);

- It's passed with the request and its length is 101 (fail);

These are requirements your client has given you: You need to test them!

The validation tests are perhaps a good example of where one might use a focused unit test, rather than a functional test that actually makes a request to /processMyForm and analyses the response. Maybe you just pass a request object, or the request body values to a validate method, and check the results.
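As a rough illustration of that focused unit-test approach, here are the five firstName cases expressed as data and run against a validate function. It's a sketch under assumptions, not a prescribed design: `RequestBody`, `validateFirstName`, `stringOfLength` and the error messages are all made up for the example.

```typescript
// Hypothetical request-body shape: values arrive as strings and may be absent.
type RequestBody = Record<string, string | undefined>;

// Returns validation error messages; an empty array means firstName is valid.
function validateFirstName(body: RequestBody): string[] {
  const value = body["firstName"];
  if (value === undefined) {
    return ["firstName is required"];
  }
  if (value.length < 1) {
    return ["firstName must not be empty"];
  }
  if (value.length > 100) {
    return ["firstName must be at most 100 characters"];
  }
  return [];
}

// Test helper: builds a string of exactly the requested length.
function stringOfLength(length: number): string {
  let result = "";
  for (let i = 0; i < length; i++) {
    result += "x";
  }
  return result;
}

interface FirstNameCase {
  description: string;
  body: RequestBody;
  shouldPass: boolean;
}

// The five cases from the list above, one row each.
const firstNameCases: FirstNameCase[] = [
  { description: "not passed at all", body: {}, shouldPass: false },
  { description: "passed but empty", body: { firstName: "" }, shouldPass: false },
  { description: "length is 1", body: { firstName: stringOfLength(1) }, shouldPass: true },
  { description: "length is 100", body: { firstName: stringOfLength(100) }, shouldPass: true },
  { description: "length is 101", body: { firstName: stringOfLength(101) }, shouldPass: false },
];

// Returns the descriptions of any cases whose outcome differs from the expectation.
function failingFirstNameCases(): string[] {
  const failing: string[] = [];
  for (const testCase of firstNameCases) {
    const passed = validateFirstName(testCase.body).length === 0;
    if (passed !== testCase.shouldPass) {
      failing.push(testCase.description);
    }
  }
  return failing;
}
```

No HTTP request, no controller, no storage: just values in and errors out, which is what keeps tests at this level quick to write and quick to run.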

Once validation is in place, you'd need to vary the response based on those results: "when validation fails it returns a 400-BAD-REQUEST"; "when validation fails it returns a non-empty-array errors with validation failure details", etc. All actual requirements you've been given; all need to be tested.
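Those two response cases might be pinned down along these lines. Again this is a sketch with invented names (`FormResponse`, `Validator`, `processForm`, `requireFirstName`), and the 201 on the success path is only an assumption echoing the status mentioned earlier; the real handler would sit behind whatever framework you use.

```typescript
// Hypothetical response shape: just the parts the two cases care about.
interface FormResponse {
  status: number;
  errors: string[];
}

// Hypothetical validator signature: request values in, error messages out.
type Validator = (body: Record<string, string | undefined>) => string[];

// Varies the response based on the validation result.
function processForm(
  body: Record<string, string | undefined>,
  validate: Validator
): FormResponse {
  const errors = validate(body);
  if (errors.length > 0) {
    // "when validation fails it returns a 400-BAD-REQUEST" with the failure details
    return { status: 400, errors: errors };
  }
  // Success status is an assumption for this sketch.
  return { status: 201, errors: [] };
}

// A deliberately tiny validator so the example is self-contained.
const requireFirstName: Validator = (body) => {
  const value = body["firstName"];
  if (value === undefined || value.length === 0) {
    return ["firstName is required"];
  }
  return [];
};
```

Passing the validator in as an argument is one way to test the response logic with a stubbed validation result, separately from the validation rules themselves.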

Then you'd move on to whatever other business logic is needed, step by step, until you get to a point where yer firing some values into storage or whatever, and you check the expected values for each field are passed to the right place in storage. Although I'd still use a mock (or spy, or whatever the precise term is), and just check what values it receives, rather than actually letting the test write to storage.
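A hand-rolled spy is enough to show the idea of checking what storage receives without writing to it. Everything here is hypothetical (`RegistrationForm`, `StoredRegistration`, `RegistrationStorage`, `StorageSpy`, `saveRegistration`, and the column-style field names); a mocking library would do the same job with less typing.

```typescript
// Hypothetical form values, after validation.
interface RegistrationForm {
  firstName: string;
  lastName: string;
}

// Hypothetical shape of the record handed to storage.
interface StoredRegistration {
  first_name: string;
  last_name: string;
}

// The seam the save logic depends on; production code would implement it with a real DB call.
interface RegistrationStorage {
  save(record: StoredRegistration): void;
}

// Spy: records what it was asked to save instead of writing anywhere.
class StorageSpy implements RegistrationStorage {
  public readonly received: StoredRegistration[] = [];

  save(record: StoredRegistration): void {
    this.received.push(record);
  }
}

// Code under test: maps form values onto the stored record and passes it to storage.
function saveRegistration(form: RegistrationForm, storage: RegistrationStorage): void {
  storage.save({ first_name: form.firstName, last_name: form.lastName });
}
```

A test then creates a `StorageSpy`, calls `saveRegistration`, and asserts on `received`: that there was exactly one call, and that each field landed in the expected place. Nothing touches a database, so there is no mess to clean up afterwards.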

It also has end-to-end acceptance tests

At this point you can demonstrate the requirements have been tested, and you know they work. I'd then put an end-to-end happy path test on that (maybe all the way from automating the form submission with a virtual web client, maybe just by sending a POST request; either is valid). And then I'd do an end-to-end unhappy path test, eg: when validation fails, are the correct messages put in the correct place on the form, or whatever. Maybe there are other valid variations of end-to-end tests here, but I would not think to have an end-to-end test for each form field, and each validation rule. That'd be fiddly to write, and slow to run.

It does need to cover all the behaviour

I fully subscribe to the "100% test coverage is a fools errand" ethos

Steady on there. There's 100% and there's 100%. This notion is applied to lines-of-code, or 100% of methods, or basically implementation detail stuff. And it's also usually trotted out by someone who's looking at the code after it's been done, and is faced with a whole pile of testing to write and trying to work out ways of wriggling out of it. This is no slight on you, Adam (Tuttle), it's just how I have experienced devs rationalise this with me. If one does TDD / BDD, then one is not thinking about lines of code when one is testing. One is thinking about behaviour. And the behaviour has been requested by a client, and the behaviour needs to work. So we test the behaviour. Whether that's 1 line of code or 100 is irrelevant. However the test will exercise the code, because the code only ever came into existence to address the case / behaviour being delivered. Using TDD generally results in ~100% of the code being covered because you don't write code you don't need, which is the only time code might not be covered. How did that code get in there? Why did you write it? Obviously it's not needed so get rid of it ;-).

The key here is that 100% of behaviour gets covered.

Nothing is absolute though. There will be situations where some code - for whatever reason - is just not testable. This is rare, but it happens. In that case: don't get hung up on it. Isolate it away by itself, mark it as not covered (eg in PHPUnit we have @codeCoverageIgnore), and move on. But be circumspect when making this decision: situations where one genuinely can't test some code are very rare. I find devs quite often seem to confuse "can't" with "don't feel like ~". Two different things ;-)

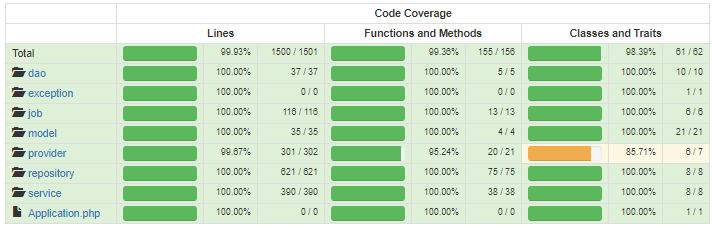

I'll also draw you back to an article I wrote ages ago about the benefits of 100% test coverage: "Yeah, you do want 100% test coverage". TL;DR: where in these two displays can you spot then new code that is accidentally missing test coverage:

Accidents are easy to spot when a previously all-green board starts being not all-green.

It uses emergent design to solve large problems

[My] brain wants to architect a system that [long and complicated description follows]

One of the premises of TDD is that you let the solution architect itself. I'm not 100% behind this as I can't quite see it yet, but I know I do find it really daunting if my requirement seems to be "it all does everything I need it to do", and I don't know where to start with that. This was my real life experience doing that Vue.js stuff I linked to above. I really did start with "yikes this whole form thing is gonna be a monster!? I don't even know where to start!". I pushed the end result I thought I might have to the back of my mind, especially the architectural side of things (which will probably more define itself in the refactor stage of things, not the red / green part).

And I started by adding a route for the form, and then I responded to requests to that route with a 200-OK. And then moved on to the next bite of the elephant.

HTH.

--

Adam (Cameron)